Environment

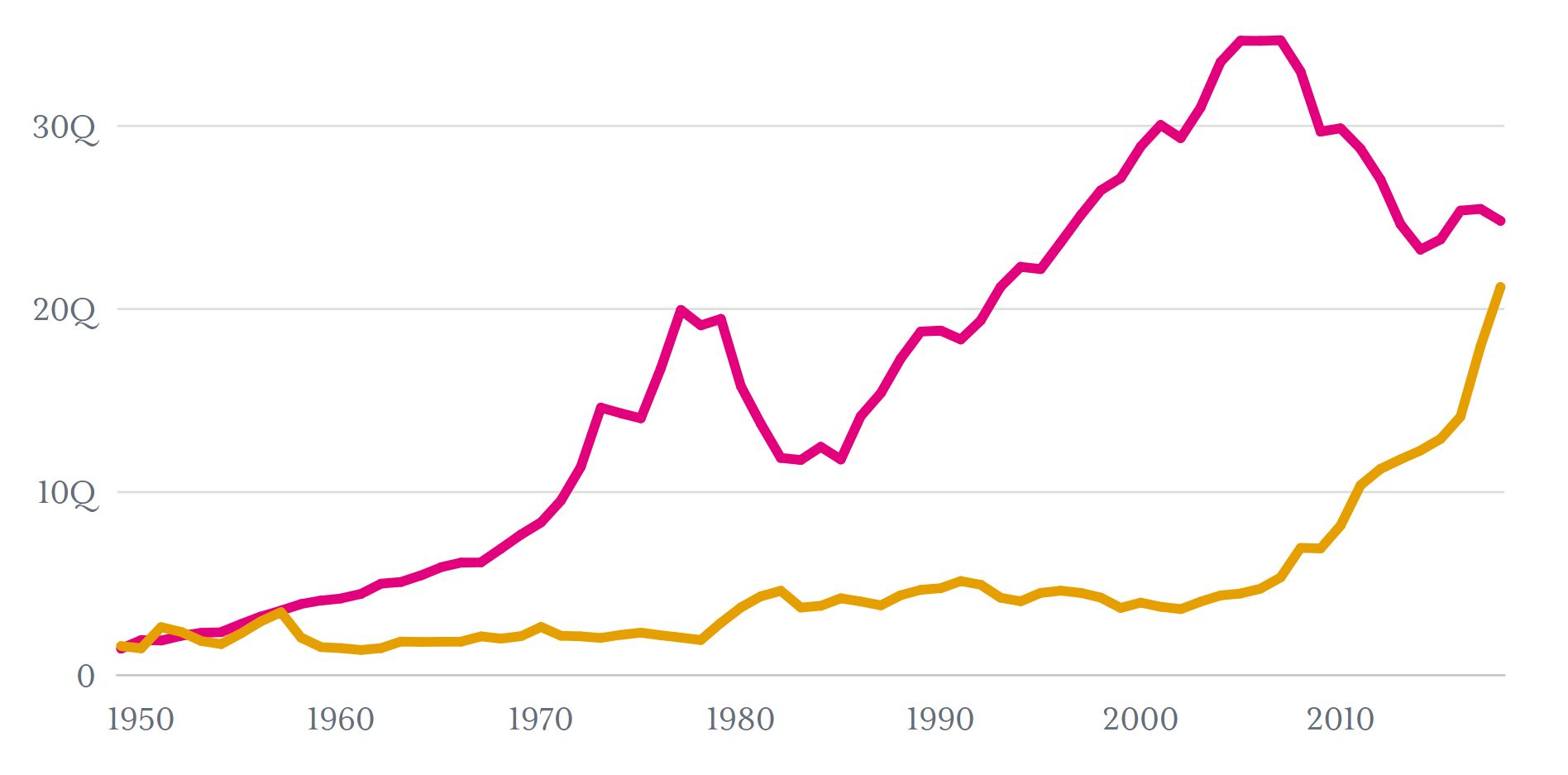


Decades after the launch of the first arcade game in 1971, the popularity of video games today is undeniable.

Globally, 2.2 to 2.5 billion people were estimated to be gamers in 2017. In the US, 66% of people over the age of 13 played video games in 2018.

Modern video games use sophisticated graphics and elaborate world-building to provide gamers with the best possible experience. A video game of today, however, could use 50 times the electricity used by Pong, a popular arcade game released in the 1970s.

To better understand the impact that video games have on energy consumption, the California Energy Commission funded a study on video game usage in the state. Using market research and in-lab tests of video game equipment, the study analyzed variations in gaming behavior, hardware, and game type to propose potential strategies for companies and individual gamers to minimize their energy use.

Results were released in a 2018 report. Software strategies and gamer access to information about energy use were identified as crucial to reducing video game energy consumption.

In 2016, there were 15 million gaming platforms, meaning home computers, home consoles, and streaming devices, in California. These platforms collectively consumed 4.1 terawatt-hours per year, equaling the combined amount of energy used by dishwashing machines and freezers in the state. California gamers spent $700 million[1] powering these systems.
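The statewide totals above imply some per-platform figures. The sketch below is a back-of-the-envelope calculation, not one taken from the report. It assumes that the 15 million platforms, the 4.1 terawatt-hours, and the $700 million all describe the same year and the same set of devices. The variable names are illustrative.

```python
# Back-of-the-envelope figures derived from the statewide totals quoted above.
# Assumption: all three totals describe the same year and the same platforms.

PLATFORMS = 15_000_000          # gaming platforms in California (2016)
ENERGY_TWH = 4.1                # total consumption, terawatt-hours per year
SPEND_USD = 700_000_000         # electricity spending, dollars per year

KWH_PER_TWH = 1_000_000_000     # 1 TWh = 1e9 kWh

energy_kwh = ENERGY_TWH * KWH_PER_TWH
kwh_per_platform = energy_kwh / PLATFORMS
implied_price = SPEND_USD / energy_kwh
cost_per_platform = SPEND_USD / PLATFORMS

print(f"Energy per platform: {kwh_per_platform:.0f} kWh/year")
print(f"Implied electricity price: ${implied_price:.3f}/kWh")
print(f"Cost per platform: ${cost_per_platform:.2f}/year")
```

Under those assumptions, the average platform used roughly 273 kWh and cost about $47 to power per year, at an implied average price of about 17 cents per kWh. These are averages across PCs, consoles, and streaming devices, so individual systems will differ widely.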

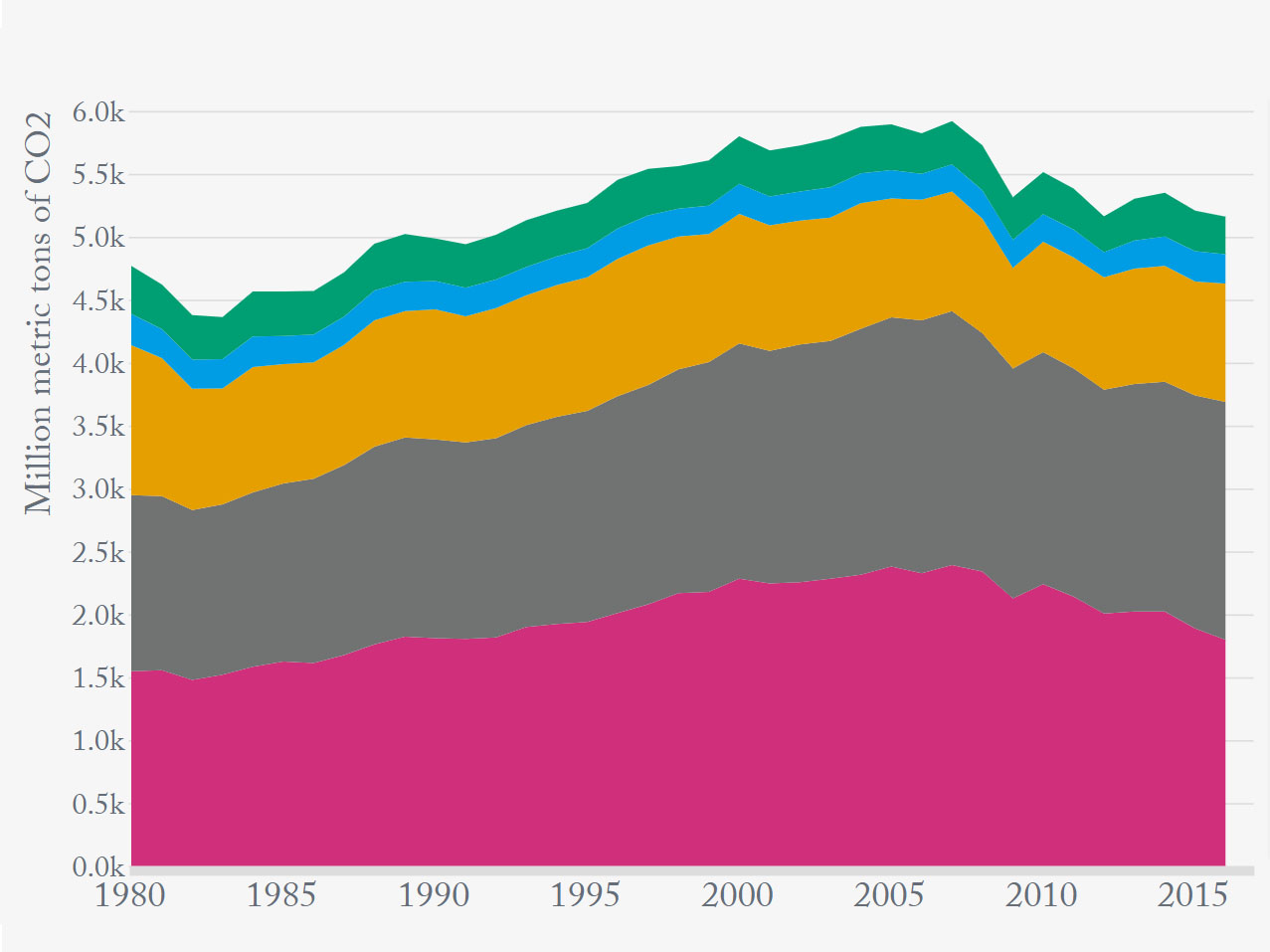

Gaming was responsible for 1.5 million tons of CO2 emissions, which would be equivalent to the emissions of more than 320,000 gasoline-powered passenger vehicles being driven for one year. In 2015, about 5% of the state’s residential energy use among investor-owned utilities came from gaming.[2]
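Two rough ratios follow from these emissions figures. The sketch below is illustrative only. It assumes the 1.5 million tons of CO2 and the 4.1 terawatt-hours quoted earlier refer to the same year, and it treats "more than 320,000" as exactly 320,000 vehicles, so the per-vehicle result is an upper bound.

```python
# Rough ratios implied by the emissions figures quoted above.
# Assumptions: the 1.5 million tons and the 4.1 TWh refer to the same year,
# and "more than 320,000" is treated as exactly 320,000 vehicles.

EMISSIONS_TONS = 1_500_000      # CO2 attributed to gaming
VEHICLE_EQUIVALENT = 320_000    # passenger vehicles driven for one year
ENERGY_MWH = 4.1 * 1_000_000    # 4.1 TWh expressed in megawatt-hours

# Upper bound on the annual emissions assumed per vehicle.
tons_per_vehicle = EMISSIONS_TONS / VEHICLE_EQUIVALENT

# Average emissions per unit of electricity used for gaming.
tons_per_mwh = EMISSIONS_TONS / ENERGY_MWH

print(f"At most {tons_per_vehicle:.2f} tons of CO2 per vehicle per year")
print(f"About {tons_per_mwh:.2f} tons of CO2 per MWh of gaming electricity")
```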

There are three broad categories of gaming systems: personal computers (PCs),[3] which include desktops and laptops; consoles; and media-streaming devices. The equipment that gamers use varies by gaming system. For example, consoles mostly need only a television or monitor to work, but personal computers can have a wide range of gaming accessories.

To account for this, the report used price and computing power to categorize PCs used for gaming into entry-level, mid-range, and high-end. The report also categorized gamers into light, moderate, intensive, and extreme groups based on the number of hours spent gaming.[4]

The equipment that gamers use, and consequently the level of energy consumed, varies based on their gaming behavior. High-end PC gamers, for example, tend to have more accessories that use more energy than those playing on home consoles.

Video game energy usage is influenced by numerous factors including hardware, software, the type of game, and user behavior.

Because energy usage fluctuates based on how these factors interact with one another, it’s difficult to quantify all the possible effects. However, the report identified some general energy-saving trends.

The report identified mid-range and high-end desktop computers as the highest per-unit energy consumers. Generally, consoles use less energy than desktop computers. Media streaming devices such as Apple TV consume the least energy, but cloud-based gaming services can more than double the energy usage for those devices.

Additionally, there’s a wide range in power use for systems while they are idling or in standby mode. Inefficient systems can use as much energy idling as during active gaming sessions. This may partially be attributed to the efficiency of the system’s graphics processing unit (GPU). The GPU is responsible for 45% to 77% of a gaming system’s total energy consumption while in gaming mode and 12% to 33% during idle mode.
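To make the GPU percentages concrete, the sketch below applies them to a made-up system. The 300-watt and 60-watt figures are hypothetical, not measurements from the report, and the example assumes the GPU's share of energy can be applied directly to a steady power draw.

```python
# Estimate the GPU's portion of a system's power draw from the ranges above.
# The wattages below are hypothetical examples, not measurements from the report.

GPU_SHARE_GAMING = (0.45, 0.77)   # GPU share of total energy while gaming
GPU_SHARE_IDLE = (0.12, 0.33)     # GPU share of total energy while idle


def gpu_watts_range(system_watts, share_range):
    """Return the (low, high) GPU power in watts for a given total system draw."""
    low_share, high_share = share_range
    return system_watts * low_share, system_watts * high_share


# Hypothetical desktop: 300 W while gaming, 60 W while idle.
gaming_low, gaming_high = gpu_watts_range(300, GPU_SHARE_GAMING)
idle_low, idle_high = gpu_watts_range(60, GPU_SHARE_IDLE)

print(f"GPU while gaming: {gaming_low:.0f} to {gaming_high:.0f} W")
print(f"GPU while idle:   {idle_low:.1f} to {idle_high:.1f} W")
```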

Other accessories such as high-resolution 4K display monitors, audio equipment, and VR headsets also contribute to a gaming system’s total energy usage, usually by making the GPU work harder.

High-resolution 4K monitors gobble up a considerable amount of power. Audio equipment uses less power, unless it’s used for long periods of time. Some of the VR headsets researchers tested used 38% more power than a 4K monitor. But other VR headsets used 15% less energy than those displays.

The researchers hypothesized that this was due to those headsets using something called foveated rendering. Foveated rendering is a technique in which the VR headset drastically lowers image quality in the user’s peripheral vision.

Software settings can help gamers reduce their energy usage without negatively impacting the gaming experience.

For example, enabling VSync, a setting that matches the graphics processing rate with the monitor’s refresh rate, cut energy use by 14% to 39%. Similarly, underclocking, or adjusting a computer’s timing settings so that the CPU and GPU run at a lower clock rate, reduced power use by up to 25%.[5]

Finally, dynamic voltage and frequency scaling is a technique that adjusts the power used by a CPU or GPU to match the level of power that’s needed to render a game’s graphics. Average energy savings were around 20% when using this technique.
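As a rough illustration of what these percentages mean in practice, the sketch below applies each reported figure, one at a time, to a hypothetical gaming PC. The 500 kWh baseline is invented for the example, and the percentages are treated as reductions in annual energy use, which is a simplification. The savings are not combined, because the figures above do not say whether they stack.

```python
# Apply each reported savings figure, one at a time, to a hypothetical baseline.
# Assumptions: the 500 kWh/year baseline is invented for illustration, and the
# percentages are treated as reductions in annual energy use. The savings are
# NOT combined, because the article does not say whether they stack.

BASELINE_KWH = 500.0  # hypothetical annual consumption of one gaming PC

SAVINGS = {
    "VSync (low end)": 0.14,
    "VSync (high end)": 0.39,
    "Underclocking (up to)": 0.25,
    "Dynamic voltage and frequency scaling (average)": 0.20,
}


def energy_after_saving(baseline_kwh, saving_fraction):
    """Return annual energy use after a fractional reduction."""
    if not 0.0 <= saving_fraction <= 1.0:
        raise ValueError("saving_fraction must be between 0 and 1")
    return baseline_kwh * (1.0 - saving_fraction)


results = {name: energy_after_saving(BASELINE_KWH, s) for name, s in SAVINGS.items()}

for name, kwh in results.items():
    print(f"{name}: {kwh:.0f} kWh/year (saves {BASELINE_KWH - kwh:.0f} kWh)")
```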

The game that is played can also affect energy usage. Some games offer frequent pause points that allow gamers to save their progress and shut off their screen rather than leaving it idle, while others do not.

Interestingly, in its analysis of 21 different popular games, the report found that the genre and complexity of the game played were not reliable predictors of energy consumption.

Simpler games such as Candy Crush or Sims 4 did not consume less power than more elaborate games, and in some situations, consumed more energy than high-fidelity games such as League of Legends and Skyrim.

Gaming behavior also contributes significantly to energy use, especially the amount of time gamers spend actively gaming versus idling or turning their screens off.

Light gamers, those spending less than 15 minutes a day gaming, have their systems in idle or standby mode for most of the day. But even the most extreme gamers spend more time in standby than in active gaming.
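A simple duty-cycle model shows why non-gaming hours matter so much. The sketch below uses the daily gaming hours the report assigned to each gamer group (0.1, 0.9, 2, and 5 hours). The wattages are hypothetical placeholders, and the model treats every non-gaming hour as standby, ignoring the difference between idling, standby, and being switched off.

```python
# Split a gamer's annual energy use between active play and standby time.
# Daily gaming hours come from the report's gamer categories; the wattages
# are hypothetical placeholders, not values from the report.

HOURS_PER_DAY = 24
DAYS_PER_YEAR = 365

GAMING_HOURS = {"light": 0.1, "moderate": 0.9, "intensive": 2.0, "extreme": 5.0}

ACTIVE_WATTS = 200.0   # hypothetical draw while gaming
STANDBY_WATTS = 5.0    # hypothetical draw for the rest of the day


def annual_energy_kwh(gaming_hours):
    """Return (active_kwh, standby_kwh) per year for a daily gaming duration."""
    standby_hours = HOURS_PER_DAY - gaming_hours
    active_kwh = gaming_hours * ACTIVE_WATTS * DAYS_PER_YEAR / 1000
    standby_kwh = standby_hours * STANDBY_WATTS * DAYS_PER_YEAR / 1000
    return active_kwh, standby_kwh


for group, hours in GAMING_HOURS.items():
    active, standby = annual_energy_kwh(hours)
    share = standby / (active + standby)
    print(f"{group:>9}: {active + standby:6.1f} kWh/year, {share:.0%} from standby")
```

With these placeholder wattages, standby accounts for most of a light gamer's annual energy use and only a small fraction of an extreme gamer's. A system that idles at a higher wattage would shift the balance further toward non-gaming time.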

Considering all the contributors to energy usage, the researchers calculated a theoretical worst-case scenario. An intense gamer who overclocks a high-end PC,[6] uses three 4K displays, and relies on cloud-based gaming would consume 2,560 kWh per year. This worst-case scenario would be more than double the average energy usage for a high-end PC user.
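The sketch below puts the 2,560 kWh figure in context using the statewide totals quoted earlier in the article. It is an illustration rather than a calculation from the report: the electricity price is the statewide average implied by $700 million spent on 4.1 terawatt-hours, which may not match any individual gamer's rate.

```python
# Put the 2,560 kWh worst case in context using figures quoted earlier.
# Assumption: the electricity price is the statewide average implied by
# $700 million spent on 4.1 TWh, which may not match an individual's rate.

WORST_CASE_KWH = 2_560
STATE_SPEND_USD = 700_000_000
STATE_ENERGY_KWH = 4.1 * 1_000_000_000   # 4.1 TWh in kWh
STATE_PLATFORMS = 15_000_000

implied_price = STATE_SPEND_USD / STATE_ENERGY_KWH
worst_case_cost = WORST_CASE_KWH * implied_price

# "More than double the average" means the high-end average is below half.
high_end_average_ceiling = WORST_CASE_KWH / 2

# Comparison with the statewide average across all platform types.
average_platform_kwh = STATE_ENERGY_KWH / STATE_PLATFORMS
multiple_of_average = WORST_CASE_KWH / average_platform_kwh

print(f"Worst-case annual cost: ${worst_case_cost:.0f}")
print(f"High-end PC average is below {high_end_average_ceiling:.0f} kWh/year")
print(f"Worst case is {multiple_of_average:.1f}x the average platform")
```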

While improving energy efficiency without adversely affecting game performance is a delicate balance, hardware and software developers have improved energy efficiency in the past, even within a single generation of consoles. The Xbox 360 and PlayStation 3 were released in 2005 and 2006, respectively. The 2012 versions of those consoles used at least 50% less energy during gameplay than the original models.

But the report points out that consumers don’t have a lot of information about how much energy is used while gaming. This makes it difficult for gamers to consider ways to save energy in the first place.

The researchers called on developers and manufacturers to provide more detailed and specific information about how much energy is used during gaming. They suggested gamers could even be incentivized to reduce energy usage as part of their game experience.

Learn more about the environmental challenges that the US faces as a country.

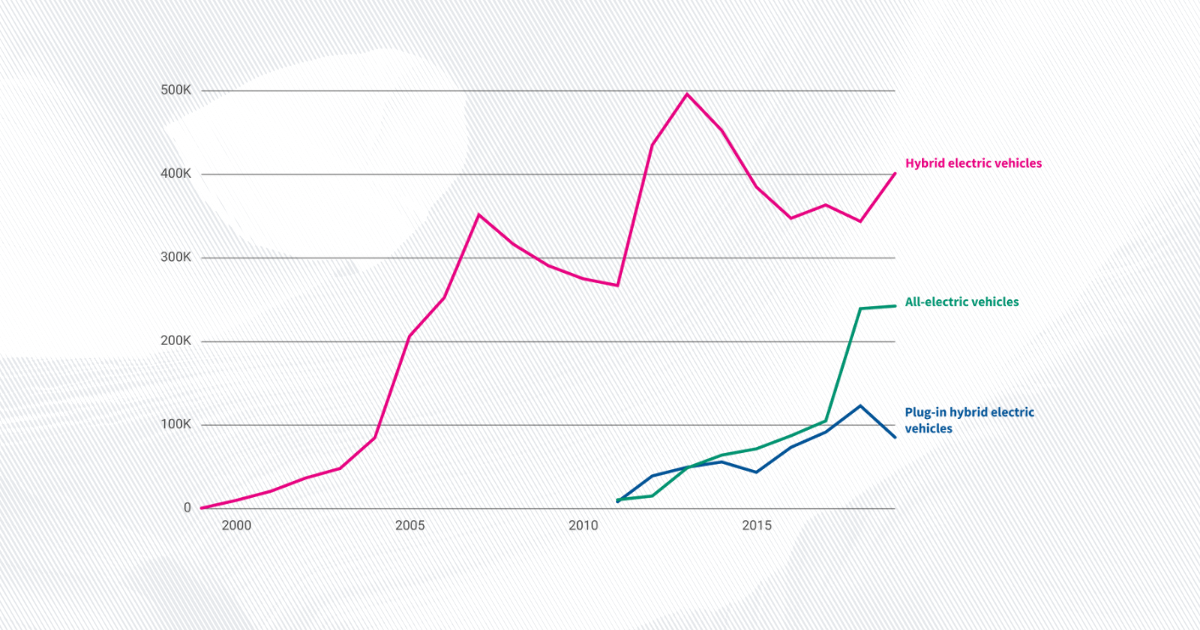

For a fuller picture of climate in the US, read about emissions electric cars produce, and get the data directly in your inbox by signing up for our weekly newsletter.

[1] This only includes the cost of the electricity, not the cost of buying the equipment.

[2] Investor-owned utilities (IOUs) are private companies such as Pacific Gas & Electric. In 2017, IOUs served at least half of California’s population and approximately 72% of the US population.

[3] Personal computers include Windows and Mac OS systems.

[4] Light gamers were those who gamed for 0.1 hours or less per day, moderate gamers were those who gamed for around 0.9 hours a day, intensive gamers gamed for 2 hours a day, and extreme gamers gamed for 5 hours a day. These groups were formulated based on industry expert knowledge rather than survey data, which was not available at the time that this study was conducted.

[5] The amount of power saved changes according to the system and paired display used.

[6] Overclocking is adjusting a computer’s timing settings so that the CPU and GPU run at an increased clock rate. Gamers will overclock to decrease load times and improve the resolution of the screen.
